The danger of practical anecdotes

The use of practical anecdotes (e.g., "Google did this" and "Microsoft said that") has become an increasingly popular way of motivating experimental research. Anecdotes now also surface as key comments in the review process and in paper discussions at conferences: "I know a CFO who said this," "Why not add an example of a company that used the intervention?", and "Your experiment fails my sanity check!" In this post, I caution against making anecdotal evidence an integral part of the discourse of experimental research. Although anecdotes can serve as a great motivation for research, they can bias that discourse down the line. As such, they should never become a criterion for evaluating experimental research and should instead be treated exclusively as subjective commentary.

Two interrelated problems

Let's assume that I conducted an experiment testing whether a new presentation format for performance information increases employee performance. I finalize the paper and stumble on a media source suggesting that Google has implemented this particular presentation format among its workforce and even reports that it has recently helped improve performance. It does not take me long to find another example of an organization that has been considering a seemingly similar presentation format for a related purpose. Not long after, I find even more examples that I can relate my presentation format to, which leaves me fairly convinced that my experiment matters! Quite an appealing way of thinking, isn't it? It may be appealing, and it may offer a great motivation to start looking into the presentation format. However, there are two notable issues with going a step further and using anecdotal evidence in this way to argue for the format's general relevance.

Biased

The Anecdotal Fallacy

The use of anecdotal evidence, or isolated examples that rely on personal testimonies, to support or refute more general claims.

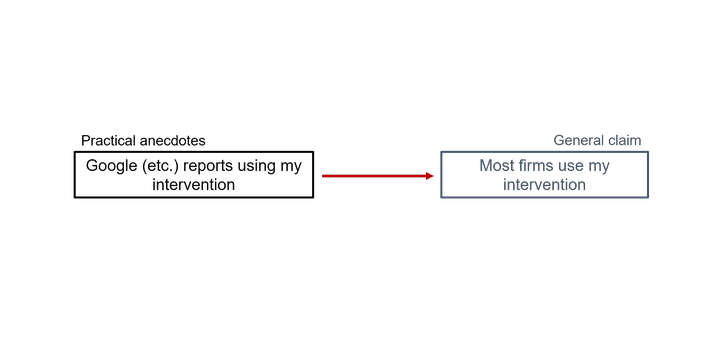

Anecdotal evidence can be used to (wrongly) make general claims. Even if you find practical anecdotes that closely resemble your experiment's intervention, the anecdotal fallacy suggests that they carry little to no weight. Google might be a big and important company, but the media source is subjective and its claims have not been verified. If you believe that a link between your experimental study and what Google supposedly reported via its PR people and journalists makes your study relevant or important, then you are relying on a practical anecdote to make an overly strong claim. This is precisely what the anecdotal fallacy entails: we mistakenly use our own or others' subjective experience to make claims about what practice is generally like, and thus about how practice relates to the relevance of experiments.

Restrictive

A related problem is that anecdotal evidence can restrict the discourse of experimental research to real-world idiosyncrasies. Traditionally, an experiment is considered useful when it offers a strong connection with theory that is commonly accepted, plausibly generalizable, and usable in the broader empirical literature. Practical anecdotes that lay bare understudied and idiosyncratic interventions do give experimental research the potential to advance and develop pre-existing theory. However, a similar argument can be made for interventions that never exist in practice or in naturally occurring settings. This last point highlights the broader issue with using practical anecdotes to evaluate the relevance of experimental research. Even if we cannot link our experimental research to our own or others' reported experiences in the real world, that, in and of itself, could make the research interesting. Therefore, anecdotal evidence of interventions or phenomena in practice should never be a necessary condition for studying their effects.

Conclusion

Commentary, which often includes practical anecdotes, is part of writing and of the discourse of experimental research. It helps motivate research and makes it more approachable for a broader audience. However, this form of commentary should not be a necessary criterion for experimental research, because it can bias and restrict that discourse. The alternative is straightforward and has been the status quo in the empirical social sciences for decades: To what extent does an experimental study offer an incremental contribution to a literature? In other words, to what extent does an experiment push our shared, commonly accepted, and well-documented knowledge about accounting phenomena forward? One sentence explaining an experiment's incremental contribution to a literature should carry a thousand times more weight than a six-page introduction full of anecdotes.